ETL vs ELT in 2025: Which Approach for Your Data Warehouse?

This article explains how ETL and ELT differ in 2025: the pros and cons of each, typical use cases, and how to choose the right approach for a modern data architecture.

By 2025, the data integration landscape has evolved significantly, and the balance between ETL and ELT adoption has shifted. The right choice depends more than ever on infrastructure, data volumes, flexibility requirements, and compliance needs.

Understanding ETL and ELT

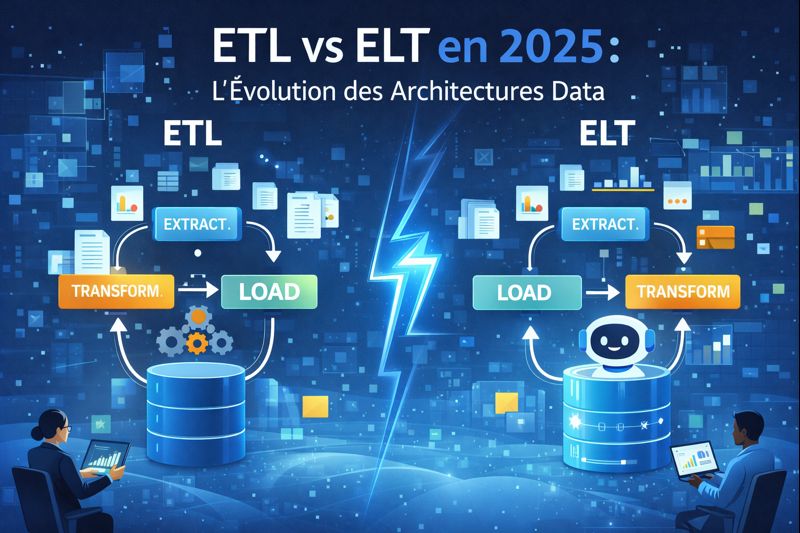

Both methods move data into a Data Warehouse. They differ in the order of operations:

- ETL (Extract, Transform, Load): Data transformation occurs BEFORE loading into the Data Warehouse.

- ELT (Extract, Load, Transform): Raw data is loaded first, and transformation occurs AFTER loading, inside the Data Warehouse.
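The difference in ordering can be sketched in a few lines. This is a minimal illustration, not a production pipeline: an in-memory SQLite database stands in for the Data Warehouse, and the table names, columns, and sample rows are all hypothetical.

```python
import sqlite3

# Hypothetical source rows: (order_id, amount in cents as text, country code)
SOURCE_ROWS = [(1, "1990", "fr"), (2, "500", "de"), (3, "1250", "fr")]


def run_etl(conn):
    """ETL: transform in application code, then load only the cleaned rows."""
    cleaned = [(oid, int(amount), country.upper())
               for oid, amount, country in SOURCE_ROWS]
    conn.execute(
        "CREATE TABLE orders_etl (order_id INTEGER, amount_cents INTEGER, country TEXT)"
    )
    conn.executemany("INSERT INTO orders_etl VALUES (?, ?, ?)", cleaned)


def run_elt(conn):
    """ELT: load the rows as-is, then transform with SQL inside the warehouse."""
    conn.execute(
        "CREATE TABLE raw_orders (order_id INTEGER, amount TEXT, country TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", SOURCE_ROWS)
    conn.execute(
        "CREATE TABLE orders_elt AS "
        "SELECT order_id, CAST(amount AS INTEGER) AS amount_cents, "
        "UPPER(country) AS country FROM raw_orders"
    )


conn = sqlite3.connect(":memory:")  # stand-in for the Data Warehouse
run_etl(conn)
run_elt(conn)

etl_rows = conn.execute("SELECT * FROM orders_etl ORDER BY order_id").fetchall()
elt_rows = conn.execute("SELECT * FROM orders_elt ORDER BY order_id").fetchall()
raw_count = conn.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
```

Both paths end with the same cleaned table. The difference is that the ELT path also leaves `raw_orders` in the warehouse, while the ETL path never stores the untransformed values.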

Major Changes and Evolutions in 2025

Several key factors have redefined the relevance of each approach:

1. ELT Becomes the New Default Standard

The most significant change is that ELT has established itself as the default standard for modern data architectures in 2025.

Driver of change: The rise of cloud Data Warehouses and analytics platforms (such as Snowflake, Google BigQuery, Amazon Redshift, Azure Synapse, and particularly Microsoft Fabric). Their massively parallel processing (MPP) engines provide enough computing power to transform data after it has been loaded, which has made ELT faster, more scalable, and more flexible.

Key advantages of ELT:

- Speed: Direct loading of raw data, without intermediate bottlenecks.

- Scalability: Native exploitation of cloud DWH computing power.

- Flexibility: Preservation of raw data allowing reprocessing or new analyses at any time.

- Access to raw data: Essential for Machine Learning and advanced analytics.
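The flexibility argument is easiest to see with a second question that arrives after the first model was built. The sketch below is an illustration under stated assumptions: SQLite stands in for the cloud DWH, and `raw_events` with its columns is a hypothetical table.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the cloud DWH

# Raw events are loaded once, untouched (hypothetical columns).
conn.execute("CREATE TABLE raw_events (user_id INTEGER, event TEXT, device TEXT)")
conn.executemany(
    "INSERT INTO raw_events VALUES (?, ?, ?)",
    [(1, "purchase", "mobile"), (1, "view", "desktop"),
     (2, "purchase", "desktop"), (2, "purchase", "mobile")],
)

# First model, built at launch: purchases per user (the device column is dropped).
conn.execute(
    "CREATE TABLE purchases_per_user AS "
    "SELECT user_id, COUNT(*) AS purchases FROM raw_events "
    "WHERE event = 'purchase' GROUP BY user_id"
)

# A later question needs the column the first model dropped.
# Because the raw table is preserved, the new model is just another query.
conn.execute(
    "CREATE TABLE purchases_per_device AS "
    "SELECT device, COUNT(*) AS purchases FROM raw_events "
    "WHERE event = 'purchase' GROUP BY device"
)

per_user = conn.execute(
    "SELECT * FROM purchases_per_user ORDER BY user_id").fetchall()
per_device = conn.execute(
    "SELECT * FROM purchases_per_device ORDER BY device").fetchall()
```

Had only the per-user aggregate been loaded, answering the per-device question would have meant going back to the source system and re-extracting.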

2. The Specific and Targeted Role of ETL

While ELT dominates new architectures, ETL is not disappearing. Its role has become more targeted and specific:

- Legacy Systems (On-Premise): ETL remains relevant for integrating data from traditional Data Warehouses or on-premise systems that lack the computing power of modern cloud solutions.

- Highly Sensitive Data and Strict Compliance: In sectors like healthcare or finance, regulations (e.g., GDPR) or internal policy may require sensitive fields to be masked or anonymized before the data reaches its final storage. ETL is still preferred here because it ensures that only compliant, de-identified data enters the DWH.

- Small Volumes: For modest data volumes, the overhead and cost of cloud ELT may not be justified.
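For the compliance case, the transform step sits between extraction and load so that raw identifiers never reach the warehouse. The following is a minimal sketch under several assumptions: the record fields, the `visits` table, and the key are hypothetical, and SQLite stands in for the DWH. Keyed hashing as shown here is a form of pseudonymization rather than full anonymization, so whether it is sufficient depends on the regulation and the use case.

```python
import hashlib
import hmac
import sqlite3

# Hypothetical secret; in practice it would come from a secrets manager.
PSEUDONYM_KEY = b"example-key-do-not-use"


def pseudonymize(value: str) -> str:
    """Replace an identifier with a keyed SHA-256 digest (stable, so joins still work)."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_email(email: str) -> str:
    """Keep only the domain part of an email address."""
    _, _, domain = email.partition("@")
    return "***@" + domain


def transform(record: dict) -> tuple:
    """Transform step of the ETL pipeline: runs BEFORE anything is loaded."""
    return (
        pseudonymize(record["patient_id"]),
        mask_email(record["email"]),
        record["visit_cost_cents"],
    )


# Hypothetical extracted records.
source = [
    {"patient_id": "P-001", "email": "alice@example.com", "visit_cost_cents": 12000},
    {"patient_id": "P-002", "email": "bob@example.org", "visit_cost_cents": 8500},
]

conn = sqlite3.connect(":memory:")  # stand-in for the DWH
conn.execute(
    "CREATE TABLE visits (patient_key TEXT, email_masked TEXT, visit_cost_cents INTEGER)"
)
conn.executemany("INSERT INTO visits VALUES (?, ?, ?)", [transform(r) for r in source])
loaded = conn.execute("SELECT * FROM visits ORDER BY rowid").fetchall()
```

Only the digest and the masked email are written to `visits`; the original `patient_id` and full email address exist only in the pipeline's memory.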

3. The Dominant Emergence of the Hybrid Approach

In 2025, the most common strategy is to combine both approaches, using each where it fits best:

- ELT for modern analytics: Using ELT for the majority of data, loading it raw into a Data Lake (for example, in Microsoft Fabric's OneLake) and performing transformations with powerful tools like dbt.

- ETL for legacy and compliance: Reserving ETL for existing systems or cases where compliance requires prior transformation of sensitive data (PII).

Example with Microsoft Fabric: This platform illustrates the hybrid approach. It allows raw ingestion via Data Factory, ELT transformations with Spark Notebooks or Dataflows Gen2 in the Lakehouse, and dedicated ETL pipelines for highly sensitive data.
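The hybrid rule described above (ETL for legacy systems and sensitive data, ELT for everything else) can be written down as a small routing function. This is a hedged sketch: the `Source` class, its flags, and the source names are hypothetical, and a real decision would weigh more factors such as volume and cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    """Hypothetical description of a data source to be integrated."""
    name: str
    contains_pii: bool = False
    legacy_on_premise: bool = False


def choose_pipeline(source: Source) -> str:
    """Route to ETL when data must be transformed before storage, else ELT."""
    if source.contains_pii or source.legacy_on_premise:
        return "ETL"
    return "ELT"


sources = [
    Source("web_clickstream"),
    Source("patient_records", contains_pii=True),
    Source("mainframe_billing", legacy_on_premise=True),
]

plan = {s.name: choose_pipeline(s) for s in sources}
```

Keeping the rule in one place makes the split explicit and easy to review when a new source is onboarded.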

4. The Evolution of Tools and Ecosystem

The tool landscape has followed this evolution:

- ELT: dbt (data build tool) has become the de facto standard for transformations within the Data Warehouse. Platforms like Fivetran or Airbyte facilitate raw data ingestion.

- Unified platforms: Solutions like Microsoft Fabric are designed to natively manage ELT workflows and facilitate the adoption of hybrid architectures, integrating ingestion, storage, and transformation in a coherent environment.

- ETL: Established tools like Talend, Informatica PowerCenter, SSIS, or Apache NiFi continue to be used, particularly for the specific ETL use cases described above.

Detailed ETL vs ELT Comparison (2025 Summary)

| Criteria | ETL (Specific Role) | ELT (New Standard) |
| --- | --- | --- |
| Loading speed | Slow (prior transformation) | Fast (direct loading of raw data) |
| Flexibility | Low (costly reprocessing) | High (raw data preserved) |
| Required storage | Optimized (final data only) | Larger (raw + transformed data) |
| Infrastructure cost | Dedicated ETL server (potentially) | Included in cloud DWH power |
| Raw data | Not preserved in DWH | Preserved (valuable for future analyses) |
| GDPR compliance | Easier (prior transformation) | Must be managed carefully in DWH (access management) |
| Machine Learning | Often pre-aggregated data | Access to raw data (ideal for ML) |
| 2025 use cases | Legacy, highly sensitive data | Cloud DWH, Big Data, Advanced Analytics, Agility |

Conclusion

In 2025, ELT is the standard for modern data architectures because it fully leverages the power and flexibility of cloud Data Warehouses. ETL nevertheless remains relevant for specific use cases, particularly legacy systems and strict compliance around sensitive data. Most organizations will adopt a hybrid strategy to optimize their data pipelines.